A mother – and a computer – can differentiate a baby’s cry

Brahnam, a professor of computer information systems at Missouri State University, has multiple interests in the field of technology. She collaborates on many of these projects with Dr. Loris Nanni from the Università di Bologna in Italy – a collaborator she has never met face-to-face.

One of her many research projects was developing a machine learning algorithm called the Infant Classification of Pain Expressions (ICOPE). ICOPE was designed to recognize distressed facial expressions in newborns, and it was the first of its kind. Infants at Mercy hospital were photographed while they were experiencing a number of benign nonpain stressors and an acute pain stimulus (the heel lance needed for the state-mandated blood exam).

“Are they in pain or not? You can’t tell because they cry all the time,” joked Brahnam. Furrowed brows and certain mouth or eye shapes can be tell-tale signs, but this system helps to identify pain even if nurses are busy or face blind.

Now she’s preparing to look deeper using video equipment that will not only capture the expressions but also measure heart rate, respiration rate or even changes in pixel colors to enhance the ability to classify pain expressions.

Cry without pain

The expressions in these photos were used to help Brahnam develop the Infant Classification of Pain Expressions (ICOPE) system. Infants at Mercy hospital were photographed while they were experiencing a number of benign nonpain stressors and an acute pain stimulus. Photos provided by S. Brahnam.

Technology can be art

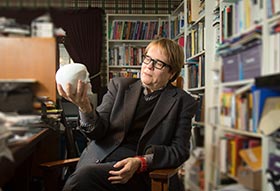

Photo by Bob Linder

As a former artist, Brahnam has always been interested in faces and expressions, so when she went back to school, her dissertation project was to build an artificial artist. To do so, she had to train a computer to recognize specific traits and teach it to see people the way human beings see people.

First, she developed a dataset of faces that people judged according to a set of traits, and then trained machines to recognize people who looked trustworthy versus untrustworthy, dominant versus submissive, and so on.

“I used facial recognition technology to classify faces according to people’s impressions of faces. I then produced an algorithm to generate from this model novel faces that were calculated to produce specific impressions on people. Artists do something similar when they draw people,” she said. “In this research I was able to show that it was possible for machines to see the social meanings of a person’s physical appearance and reproduce it – something a chatbot might want to do. People adjust their appearances for different occasions. Why not chatbots?” This is a technology Brahnam calls smart embodiment.

But how do you teach a computer?

It all starts with building an algorithm, a training set and a testing set. You begin by showing the computer something from the training set – handwritten letters, for example. The system makes a guess based on the information pre-programmed in the algorithm. If the guess is incorrect, the system is adjusted until it can guess correctly. According to Brahnam, the testing set is then used to see how well the computer can extrapolate, or how well it has learned, when given something new.
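The guess-and-adjust loop described above can be sketched in a few lines of Python. This is only an illustration, not Brahnam's method: it uses a perceptron, one of the simplest learning algorithms, and the data points, function names and learning rate are all made up for the example.

```python
# Toy data, invented for illustration: each example is (features, label).
# The training set is what the computer learns from; the testing set is
# held back to check how well it handles examples it has never seen.
TRAIN = [((2.0, 1.0), 1), ((3.0, 2.5), 1), ((2.5, 3.0), 1),
         ((-1.0, -2.0), 0), ((-2.0, -1.5), 0), ((-3.0, -0.5), 0)]
TEST = [((4.0, 3.0), 1), ((1.5, 2.0), 1),
        ((-2.5, -3.0), 0), ((-1.0, -1.0), 0)]


def predict(weights, bias, features):
    """Guess a label (1 or 0) from a weighted sum of the features."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0


def train(examples, max_epochs=100, learning_rate=0.1):
    """Perceptron rule: guess, and adjust the weights after each wrong guess."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
        if mistakes == 0:  # every training example is now guessed correctly
            break
    return weights, bias


def accuracy(weights, bias, examples):
    """Fraction of examples whose label is guessed correctly."""
    correct = sum(predict(weights, bias, f) == y for f, y in examples)
    return correct / len(examples)


weights, bias = train(TRAIN)
print("training accuracy:", accuracy(weights, bias, TRAIN))
print("testing accuracy:", accuracy(weights, bias, TEST))
```

The testing accuracy is the number that matters: it is measured on examples the system never saw while its weights were being adjusted.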

“We now live in a world of lots of data – huge stockpiles of data. Our brains have not evolved in a big data environment. We can’t handle lots of different data and this is where machines can be very good.”

A ‘cathedral of classifiers’

While processing large volumes of data isn’t a human forte, Brahnam noted that we are better than machines at distinguishing patterns, especially in noisy environments, “because we have this evolutionary history behind us.” Infants and toddlers, she explained, find recognizable patterns, shapes and letters even in a cluster of clutter.

Much of her work deals with developing and improving multi-classifier systems that have many different learning algorithms working together on lots of different data. For example, to detect cancer, there could be a machine with many classifiers running simultaneously, each individually responsible for analyzing demographics, imagery, laboratory readings or instrumental readings. Putting the analyses together, a doctor could get a full picture of whether cancer was present.

“If we have gigantic systems working together, we think that we can make a general purpose classifier system, at least for certain classes of problems. That’s another thing that we are working really hard on: this gothic cathedral of classifiers.”

One way that a multi-classifier system works is for different classifiers to look at different aspects of a problem or data set. The different conclusions are then averaged for a final decision. The story of the blind men and the elephant illustrates the value of this approach. One person touches the tusk of an elephant and says, “Hmm. It’s made out of stone.” Another person touches the tail and says, “No, it’s fluffy. I think this is the end of a drapery.” Another person touches the legs and says, “This is definitely a tree trunk.” The classifiers in such a system work in much the same way. Each one gets different information, but if you combine them into a total picture, you might get an elephant.
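The averaging step can be sketched as follows. This is a minimal illustration rather than the system Brahnam and her collaborators built: the three classifiers, the feature names (`view_a`, `view_b`, `view_c`) and the offsets inside them are hypothetical stand-ins for trained models that each see only one aspect of the data.

```python
import math


def sigmoid(x):
    """Squash any number into a score between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


# Three hypothetical classifiers. Each looks at a different aspect of the
# same sample and returns its own confidence that the answer is "yes".
def classifier_a(sample):
    return sigmoid(sample["view_a"] - 1.0)


def classifier_b(sample):
    return sigmoid(sample["view_b"] - 2.0)


def classifier_c(sample):
    return sigmoid(sample["view_c"])


CLASSIFIERS = [classifier_a, classifier_b, classifier_c]


def ensemble_score(sample, classifiers=CLASSIFIERS):
    """Average the individual scores into one combined score."""
    scores = [classify(sample) for classify in classifiers]
    return sum(scores) / len(scores)


def ensemble_decision(sample, classifiers=CLASSIFIERS, threshold=0.5):
    """Final yes/no decision based on the averaged score."""
    return ensemble_score(sample, classifiers) >= threshold


# One classifier disagrees here, but the average still favors "yes".
mixed = {"view_a": 5.0, "view_b": 5.0, "view_c": -5.0}
print("combined score:", round(ensemble_score(mixed), 3))
print("decision:", ensemble_decision(mixed))
```

Averaging is only one way to combine the conclusions. The design choice it reflects is that no single classifier has to be right about everything, as long as the group is right on balance.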

- Story by Nicki Donnelson

- Main photo by Bob Linder